Exploring Event-Based Cameras: A New Approach to Machine Vision

- Edson Murillo

- 2 days ago

Traditional cameras remain the standard choice for many vision applications, but they are not ideal for every environment. When motion is fast, lighting is extreme, or reaction time matters, frame-based sensing starts to show its limits. Event-based cameras offer a different approach: instead of capturing full images at fixed intervals, they detect and transmit only changes in brightness as they happen.

That shift changes how visual systems sense motion, handle dynamic scenes, and manage data. For companies building robotics, industrial automation, smart mobility, medical devices, or other edge AI systems, event-based vision is becoming an increasingly practical technology to evaluate.

What Is an Event-Based Camera?

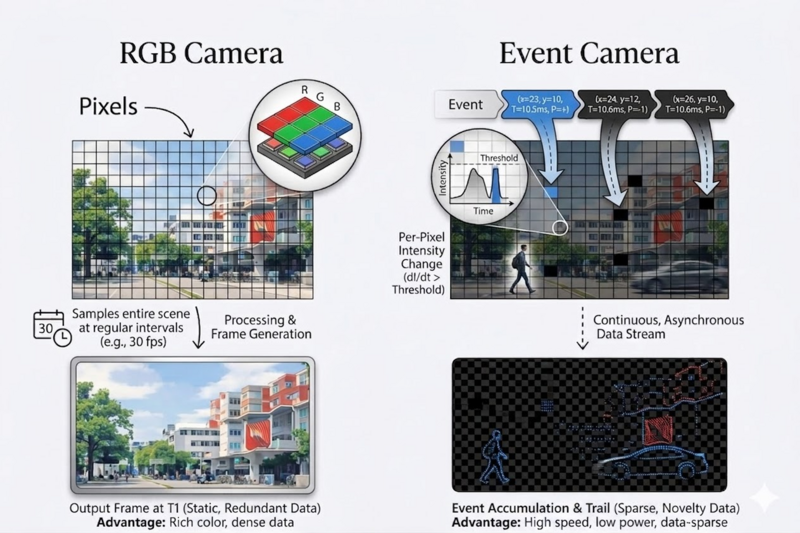

A conventional camera samples the entire scene frame by frame. Even if nothing changes, it still captures and transmits full images. An event-based camera works asynchronously at the pixel level. Each pixel continuously monitors light intensity and generates an event only when it detects a significant change in brightness.

Each event contains three basic elements:

- pixel location

- timestamp

- polarity, indicating whether brightness increased or decreased

The result is not a sequence of image frames, but a sparse stream of time-stamped changes. Static regions produce little or no output, while moving edges and dynamic transitions generate activity exactly where change occurs.
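To make the event structure concrete, here is a minimal Python sketch of such a stream. The `Event` type, its field names, the microsecond timestamp unit, and the sample values are illustrative assumptions, not the format of any particular sensor or SDK.

```python
from typing import List, NamedTuple, Set, Tuple


class Event(NamedTuple):
    """One event: pixel location, timestamp, and polarity (hypothetical layout)."""
    x: int         # pixel column
    y: int         # pixel row
    t_us: int      # timestamp in microseconds (the unit is an assumption)
    polarity: int  # +1 = brightness increased, -1 = brightness decreased


# A hypothetical stream produced by an edge moving right along row 5.
stream: List[Event] = [
    Event(x=10, y=5, t_us=100, polarity=+1),
    Event(x=11, y=5, t_us=180, polarity=+1),
    Event(x=10, y=5, t_us=260, polarity=-1),
    Event(x=12, y=5, t_us=265, polarity=+1),
]


def active_pixels(events: List[Event]) -> Set[Tuple[int, int]]:
    """Return the (x, y) locations that produced at least one event."""
    return {(e.x, e.y) for e in events}


def split_by_polarity(events: List[Event]) -> Tuple[List[Event], List[Event]]:
    """Separate brightness increases from brightness decreases."""
    increases = [e for e in events if e.polarity > 0]
    decreases = [e for e in events if e.polarity < 0]
    return increases, decreases
```

Only three pixels appear in this stream. Every other pixel in the sensor array contributes nothing, which is what "sparse" means in practice.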

This is the core difference between event-based cameras and frame-based cameras. Event cameras are not simply faster conventional sensors. They represent a different sensing model, one that emphasizes motion, timing, and relevant change instead of repeated full-scene capture.

Why Event-Based Vision Matters

The value of event-based cameras becomes clear in scenarios where traditional cameras struggle.

Frame-based systems can suffer from motion blur because they integrate light over a fixed exposure period. They can also have difficulty in high dynamic range scenes, where very bright and very dark regions appear at the same time. And when lower latency is needed, increasing frame rate usually increases bandwidth and processing requirements as well.

Event-based cameras address these issues differently. Because they react to brightness changes asynchronously, they offer:

- High temporal resolution

- Low latency

- Reduced redundant data

- Minimal motion blur

- Strong performance in scenes with large illumination differences

That makes them well suited for applications where the timing of visual change matters more than reconstructing a dense image at every moment.
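A rough back-of-the-envelope comparison illustrates the bandwidth side of this trade-off. Every number below (resolution, bytes per pixel, bytes per event, event rates) is a hypothetical placeholder chosen for easy arithmetic, not a measurement or a vendor specification.

```python
def frame_bytes_per_second(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Raw data rate of a frame-based sensor: every pixel is sent on every frame."""
    return width * height * bytes_per_pixel * fps


def event_bytes_per_second(events_per_second: int, bytes_per_event: int) -> int:
    """Raw data rate of an event stream: it scales with scene activity only."""
    return events_per_second * bytes_per_event


# Hypothetical 640x480 monochrome sensor, 1 byte per pixel.
frames_100fps = frame_bytes_per_second(640, 480, 1, 100)    # 30,720,000 B/s
frames_1000fps = frame_bytes_per_second(640, 480, 1, 1000)  # 307,200,000 B/s

# Hypothetical event encoding of 8 bytes per event.
quiet_scene = event_bytes_per_second(50_000, 8)      # 400,000 B/s
busy_scene = event_bytes_per_second(20_000_000, 8)   # 160,000,000 B/s
```

The frame-based rate grows linearly with frame rate whether or not anything moves. The event rate follows the scene instead, so a quiet scene is very cheap, while a very busy scene can approach frame-camera bandwidth.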

How Event-Based Cameras Work

Event-based vision is often described as retina-inspired. Instead of repeatedly transmitting complete images, the sensor processes changes locally and reports meaningful visual activity as it happens.

At the sensor level, each pixel continuously evaluates intensity variation over time. When the change in logarithmic intensity crosses a predefined threshold, the sensor generates an event. If brightness increases, the sensor emits an ON event. If brightness decreases, it emits an OFF event.
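The thresholding rule can be sketched for a single pixel as follows. This is a simplified model under stated assumptions: the function name, the sampled input, and the threshold value of 0.2 are hypothetical, and effects such as sensor noise and refractory periods are ignored.

```python
import math
from typing import List, Tuple


def generate_events(samples: List[Tuple[int, float]],
                    threshold: float = 0.2) -> List[Tuple[int, int]]:
    """Simulate one pixel.

    samples: (timestamp_us, intensity) pairs with intensity > 0.
    Returns (timestamp_us, polarity) pairs, +1 for ON and -1 for OFF.
    """
    events = []
    # Reference level: log intensity at the last emitted event.
    reference = math.log(samples[0][1])
    for t_us, intensity in samples[1:]:
        delta = math.log(intensity) - reference
        # One event per threshold step, so a large jump yields several events.
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            events.append((t_us, polarity))
            reference += polarity * threshold
            delta = math.log(intensity) - reference
    return events


# Constant light, then a jump up, then a drop below the starting level.
pixel = [(0, 100.0), (10, 100.0), (20, 150.0), (30, 150.0), (40, 90.0)]
print(generate_events(pixel))  # [(20, 1), (20, 1), (40, -1), (40, -1)]
```

Because the comparison is made on logarithmic intensity, a doubling from 1 to 2 produces the same events as a doubling from 1000 to 2000 in this model, which is one way to see why the approach copes well with large illumination differences.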

Because events are generated only when changes occur, the data is naturally sparse. Static parts of a scene generate few or no events (in practice, mostly sensor noise), while motion and transitions create clusters of activity. For visualization and analysis, the event stream can be accumulated over short time windows into frame-like representations, but the native output remains asynchronous and event-driven.
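One simple way to build such frame-like representations is to sum event polarities per pixel within fixed time windows. The sketch below assumes events arrive as `(x, y, t_us, polarity)` tuples; the function name and the window length are hypothetical, and real SDKs offer their own accumulation tools.

```python
from typing import Dict, List, Tuple

Frame = List[List[int]]


def accumulate(events: List[Tuple[int, int, int, int]],
               width: int, height: int,
               window_us: int) -> List[Tuple[int, Frame]]:
    """Sum polarities per pixel inside fixed time windows.

    Returns (window_start_us, frame) pairs sorted by time, where each frame
    is a height x width grid. Windows that contain no events are skipped.
    """
    frames: Dict[int, Frame] = {}
    for x, y, t_us, polarity in events:
        start = (t_us // window_us) * window_us
        if start not in frames:
            frames[start] = [[0] * width for _ in range(height)]
        frames[start][y][x] += polarity
    return sorted(frames.items())


events = [(1, 0, 100, 1), (1, 0, 900, 1), (2, 1, 1500, -1)]
for start, frame in accumulate(events, width=4, height=2, window_us=1000):
    print(start, frame)
# 0 [[0, 2, 0, 0], [0, 0, 0, 0]]
# 1000 [[0, 0, 0, 0], [0, 0, -1, 0]]
```

The window length is a design choice: a shorter window preserves more timing detail, while a longer one gives denser images at the cost of added delay.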

One important detail is often missed: an event camera can react almost immediately to visual changes, but real application responsiveness still depends on the full software and hardware pipeline. Transport, host processing, buffering, and downstream algorithms still matter. In other words, the sensor may be extremely fast, but system-level performance depends on how the full embedded solution is designed.
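A small latency budget shows why the pipeline matters. The stage names and microsecond values here are hypothetical placeholders for illustration only; real figures depend entirely on the hardware, drivers, and algorithms involved.

```python
from typing import Dict


def end_to_end_latency_us(stages: Dict[str, int]) -> int:
    """Total latency is the sum of every stage between photon and decision."""
    return sum(stages.values())


# Hypothetical per-stage latencies in microseconds (not measurements).
pipeline = {
    "sensor": 100,
    "transport": 500,
    "accumulation_window": 10_000,
    "algorithm": 2_000,
}

total_us = end_to_end_latency_us(pipeline)  # 12,600 us
slowest = max(pipeline, key=pipeline.get)   # "accumulation_window"
```

In this made-up budget the sensor accounts for under 1% of the total, and the buffering window chosen in software dominates. Tuning the pipeline, not the sensor, would be the first place to look.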

Where Event-Based Cameras Fit Best

Event-based cameras are not a replacement for every conventional image sensor. They are better understood as a complementary technology.

According to RidgeRun’s Event Cameras Developer Guide, they are especially useful in scenarios involving:

- fast motion

- strict low-latency requirements

- high dynamic range environments

- low-light or high-contrast conditions

- sparse signal detection

Typical application domains include robotics, automotive systems, industrial automation, surveillance, and scientific research. Some use cases include high-speed tracking, optical flow, vibration analysis, particle tracking, and sterility monitoring in cell therapy workflows.

For clients, the practical question is not whether event-based cameras are better in general. It is whether they solve a specific problem better than a frame-based approach.

The Trade-Offs You Should Understand

Event-based cameras have clear advantages, but they also come with real constraints.

They provide little information when neither the scene nor the camera is moving, because no brightness change means no events. They do not directly provide absolute intensity like conventional cameras, so image reconstruction requires additional processing. Many existing computer vision algorithms were designed for synchronized image frames, which means event-based pipelines often require specialized adaptation. The ecosystem is improving, but it is still less mature than the broader frame-based vision stack, and hardware cost can be higher depending on the deployment.

That is why the right question is not whether event cameras will replace standard cameras. The better question is where their sensing model creates a measurable advantage.

From Technology to Deployment

As the ecosystem matures, event-based development is becoming more practical. Commercial sensors and evaluation kits are available from vendors such as Prophesee, Sony, and iniVation, and embedded development paths are emerging on platforms such as Raspberry Pi 5 and NVIDIA Jetson. RidgeRun has published an open-source driver for the NVIDIA Jetson Orin NX. Software support is also expanding through tools such as OpenEB and the Metavision SDK, which provide access to event streaming, processing modules, visualization, recording, and application development workflows.

For product teams, that lowers the barrier to evaluating whether event-based sensing can improve a real system rather than remain a research curiosity.

Learn More in the RidgeRun Event Cameras Developer Guide

If you are evaluating event-based cameras for a new product, the best next step is to review RidgeRun’s Event Cameras Developer Guide. The guide covers the technology overview, hardware options, software ecosystem, application domains, Raspberry Pi bring-up, NVIDIA Jetson integration, Jetson driver details, and benchmarking results for the Prophesee GENX320 platform.

That breadth makes it a good entry point for engineering teams that want to understand both the technology and the practical integration path. Read more here: https://developer.ridgerun.com/wiki/index.php/Event_Cameras_Developer_Guide

Final Thoughts

Event-based cameras represent a meaningful shift in machine vision. They capture change instead of frames, prioritize temporal precision instead of dense static imagery, and offer real advantages in dynamic environments where speed, lighting robustness, and efficient data handling matter most.

They are not the right fit for every workload. But for the right application, they can unlock capabilities that frame-based systems struggle to deliver efficiently.

For customers and partners exploring next-generation embedded vision, event-based cameras are not just an interesting idea. They are a practical technology option, and with the right driver support, platform integration, and application development, they can become part of a real product roadmap.